Abstract

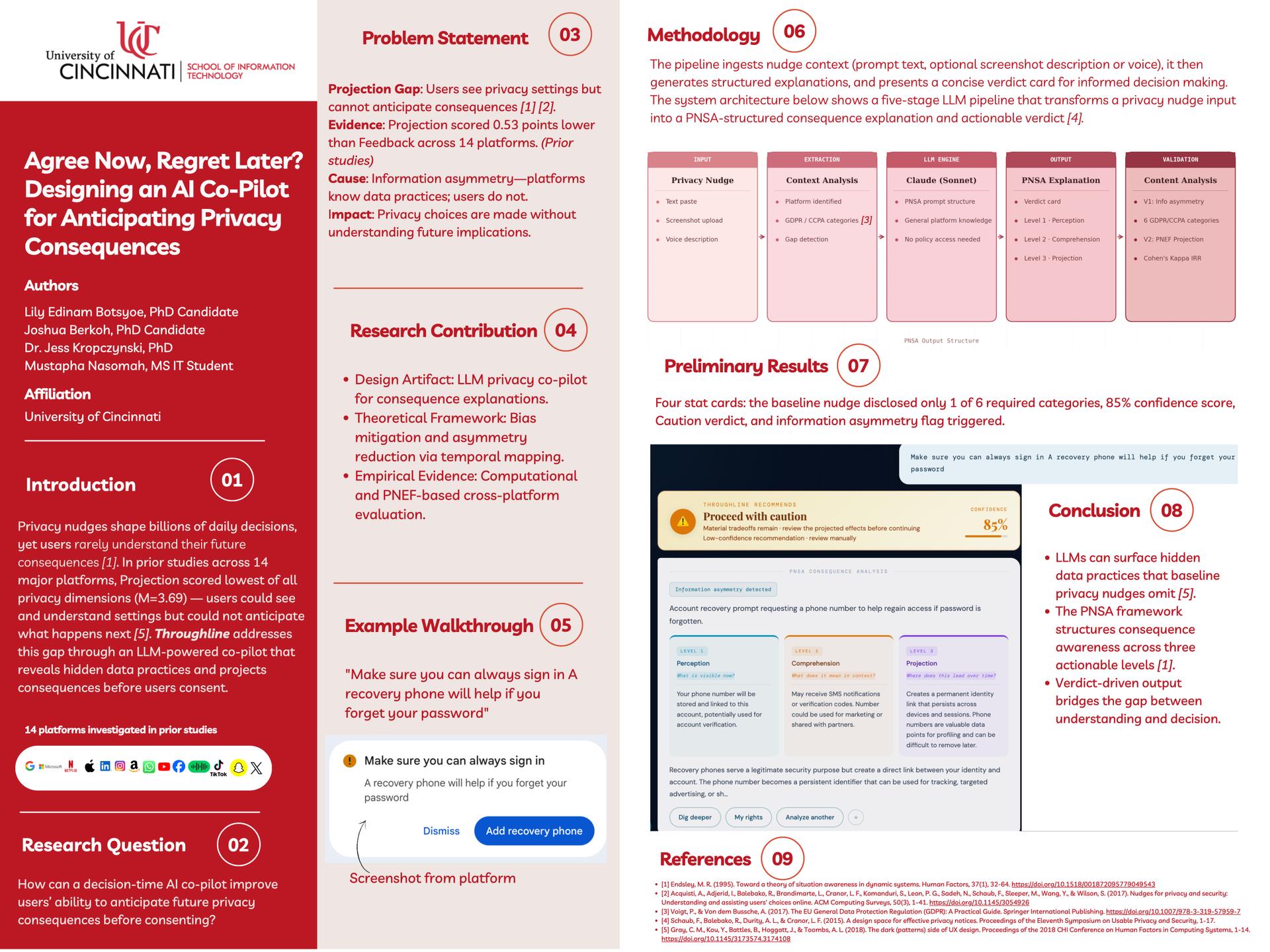

When users configure privacy settings online, they often fail to understand the long-term, compounding consequences of their choices. This "Projection Gap" leads users to unknowingly consent to data practices that do not align with their actual preferences. This research proposes that large language models (LLMs) can bridge this gap. We are designing an AI Co-Pilot interface that gives users temporal consequence explanations: it simulates and narrates the specific future outcomes of a privacy setting before the setting is saved. Grounded in the Privacy Nudge Evaluation Framework (PNEF), the Co-Pilot will translate complex technical permissions into accessible, scenario-based forecasts. By shifting privacy controls from reactive toggles to proactive, consequence-aware interactions, the tool aims to improve user comprehension, support informed decision-making, and promote overall digital well-being.

Authors: Lily Botsyoe; Jess Kropczynski; Mustapha Nasomah